Tech Explained: The ethics that make human-AI agent collaboration work

AI systems outperform humans in tasks that require speed and large-scale data processing. However, these systems must work alongside human counterparts, and those people need to feel comfortable using them. Successful human-AI collaboration depends on several factors, but above all it requires a clear set of ethical considerations that address the unease and fears people have about these systems.

Organizations are deploying agentic AI to automate complex workflows and free team members for high-value tasks. Agentic AI often processes sensitive data, which heightens privacy risks as these systems become additional nodes that bad actors can exploit. The opacity of advanced AI decision-making also raises questions about transparency and accountability. Companies that embed strong AI governance practices can build sustainable operating models that increase productivity while maintaining compliance and protection against uncertainties.

IT and business executives must create ethical agentic AI frameworks that address trust and dependence, role clarity, bias and the emotional effects of AI if they hope to create agentic teams that function effectively and benefit from human-AI collaboration.

The ethics of agentic AI

Agentic AI refers to systems that act with agency and complete specific tasks with little oversight. They use machine learning to make judgments based on previous outcomes and experiences, much as a human would approach problem-solving.

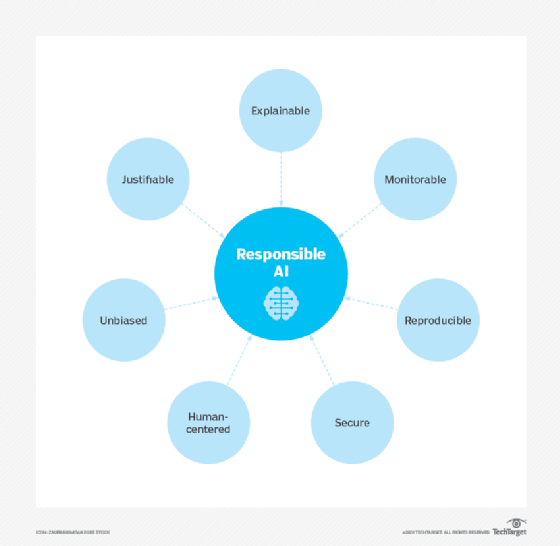

The shift from having a person in the loop to a fully autonomous operation makes agents powerful, but it also requires solid, proactive governance to address the following ethical concerns:

Transparency. The fundamental process of agentic AI decision-making follows a perceive-reason-act-learn loop. However, its nondeterministic behavior means it can produce different results even with the same prompt. McKinsey senior fellow Michael Chui highlights that consistency matters in business, especially for compliance, since unclear explanations weaken stakeholder trust.

Accountability. When AI systems act independently, it becomes harder to identify who’s responsible. Since AI can’t be held accountable for itself or assess other AI, human evaluation and technological guardrails, such as rule-based systems, must monitor outputs.

Bias and fairness. Research from the Journal of Automation and Intelligence reveals that agentic systems inherit biases from the large language models they are built on. Relying on flawed or unrepresentative data can exacerbate societal inequities and continue to affect automated decisions.

Privacy. The European Data Protection Supervisor warns that extensive, unavoidable access to consumer information makes it difficult to know what personal data AI collects, how it uses it or if new uses emerge as the AI pursues its goals.
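To make the accountability and privacy points concrete, here is a minimal sketch of a rule-based guardrail that checks an agent's proposed action before it runs and records the outcome for later human review. Everything in it is an illustrative assumption, not something from a specific product or from the sources cited above: the `ProposedAction` fields, the spending limit and the blocked action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ProposedAction:
    agent_id: str
    kind: str                          # hypothetical label, e.g. "send_email"
    amount: float = 0.0                # monetary value involved, if any
    touches_personal_data: bool = False


@dataclass
class GuardrailResult:
    allowed: bool
    reasons: list[str] = field(default_factory=list)


# Hypothetical policy values; a real policy would come from governance teams.
MAX_AUTONOMOUS_AMOUNT = 500.0
BLOCKED_KINDS = {"delete_customer_record"}

# In-memory audit trail so a person can later see what was decided and why.
audit_log: list[dict] = []


def check_action(action: ProposedAction) -> GuardrailResult:
    """Apply fixed rules to a proposed action and log the decision."""
    reasons = []
    if action.kind in BLOCKED_KINDS:
        reasons.append(f"action '{action.kind}' is never allowed autonomously")
    if action.amount > MAX_AUTONOMOUS_AMOUNT:
        reasons.append("amount exceeds the autonomous limit")
    if action.touches_personal_data:
        reasons.append("personal data access requires human review")

    result = GuardrailResult(allowed=not reasons, reasons=reasons)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent_id": action.agent_id,
        "kind": action.kind,
        "allowed": result.allowed,
        "reasons": reasons,
    })
    return result
```

Because the rules are deterministic, the same proposed action always gets the same verdict, which is the property the nondeterministic agent itself lacks. The log ties every decision to an agent and a stated reason, so a human reviewer can trace responsibility.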

Companies need clear guardrails to manage these concerns as AI adoption expands and new use cases emerge. G-P’s report “2025 AI at Work” shows that 91% of business leaders worldwide are actively scaling their AI strategies. But that doesn’t mean their implementations are sustainable.

To understand the scope and impact of AI, look at its applications across industries. In healthcare, systems like Google’s DeepMind analyze medical images to detect conditions, while human doctors make the final diagnoses and treatment decisions. In retail, AI personalizes shopping by suggesting products based on browsing and purchase behavior. Meanwhile, in finance, it offers financial planning and recommendations informed by market trends and user input.

However, these applications involve processing highly sensitive personal data. An exploitable AI that exposes this information could compromise individual privacy, violate regulations, damage organizational trust and make employees uneasy about using or working with it.

Key ethical considerations in human-AI collaboration

How employees view and use AI must evolve because this partnership affects the success of an agentic AI implementation. Leaders need to manage this relationship carefully to keep the technology aligned with organizational values. It’s important to consider the following ethical factors when implementing and operating with an agentic team:

Trust and dependence

Building trust between humans and AI agents is key to successful collaboration. Teams need to understand how agents operate to effectively manage them and ensure there isn’t overreliance on blind automation.

AI agents, while powerful, are fallible. Asana’s “State of AI at Work” report says that approximately six out of 10 workers found AI agents’ performance so unreliable that they considered them unusable. Unlike standard chatbots, agentic AI can produce incorrect answers in more complex and damaging ways. Because these systems operate autonomously, their errors can result from broken logic, inaccurate data or a lack of contextual understanding. Explainable AI methods let users understand the reasons behind an agent’s decisions. Monitoring systems can spot errors and enable team members to step in when needed.

Organizations should also apply the same quality assurance and training protocols to AI agents and human employees. A single platform for onboarding, evaluation and oversight keeps standards consistent and accountability clear. Businesses should provide agents with style guides, policies and data during onboarding to help ensure their responses are consistent with the company’s culture and mission.

Role clarity and collaboration

Ambiguity about decision-making and accountability undermines performance and trust. Organizations must clearly define the roles and responsibilities within human-AI teams to reduce confusion and ethical risks.

The human-in-the-loop (HITL) principle gives employees oversight of critical judgments, such as moral determinations or decisions in complex contexts. AI agents can handle the routine, well-bounded tasks and escalate nuanced or sensitive instances to human managers. In customer service, AI might resolve common inquiries but hand off emotionally complex or ambiguous issues to a live representative.
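The customer service handoff described above could be sketched as a simple routing rule. This is an illustration under stated assumptions rather than a reference design: the `Inquiry` fields, the intent names and both thresholds are hypothetical, and the confidence and sentiment scores are assumed to come from upstream models that are not shown here.

```python
from dataclasses import dataclass


@dataclass
class Inquiry:
    text: str
    intent: str        # hypothetical label assigned by an upstream classifier
    confidence: float  # assumed agent confidence in its answer, 0.0 to 1.0
    sentiment: float   # assumed score, -1.0 (very negative) to 1.0 (very positive)


# Hypothetical policy: only these well-bounded intents may be handled autonomously.
ROUTINE_INTENTS = {"order_status", "password_reset", "store_hours"}
MIN_CONFIDENCE = 0.8   # below this, the case counts as ambiguous
MIN_SENTIMENT = -0.4   # below this, the case counts as emotionally complex


def route(inquiry: Inquiry) -> str:
    """Return "agent" for routine cases and "human" for everything else."""
    if inquiry.intent not in ROUTINE_INTENTS:
        return "human"   # outside the agent's defined role
    if inquiry.confidence < MIN_CONFIDENCE:
        return "human"   # ambiguous: the agent is unsure of its answer
    if inquiry.sentiment < MIN_SENTIMENT:
        return "human"   # upset customer: hand off to a live representative
    return "agent"
```

The rule defaults to a person whenever any check fails, so the agent's autonomy is limited to an explicit list of tasks instead of being assumed everywhere it is not forbidden.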

Organizations should design AI-human workflows that respect this balance. Automating decision-making without meaningful human input risks ethical lapses and stakeholder backlash. Strategic oversight will increasingly replace operational control as agentic AI matures, and humans will provide high-level guidance and only intervene when AI encounters uncertainty or ethical dilemmas.

Addressing bias and fairness

Data sets that contain bias can lead to unfair outcomes in decision-making. Users who interact with AI systems can unknowingly introduce their own prejudices, whether by providing skewed information or engaging with the system in a prejudiced way. These factors shape how agentic AI makes judgments, creating real-world risks.

A University of Southern California study highlights biases seen in AI applications across different fields. In the U.S. criminal justice system, Black defendants were often labeled high risk even without prior convictions. In healthcare, an AI tool predicting patient mortality rates showed partiality against Black patients. Gender discrimination is also evident. When users asked AI models to generate images of CEOs, they mostly produced images of men, reflecting the underrepresentation of women in such roles.

To address these issues, leaders should mandate regular bias audits and testing to uncover harmful patterns. Building diverse development teams helps find blind spots. Ongoing monitoring and adjustment based on real-world use are crucial to prevent AI from drifting toward unfairness.
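As one example of what a recurring bias audit might compute, the sketch below compares approval rates across groups and reports the ratio of the lowest rate to the highest. The 0.8 threshold is the common "four-fifths" rule of thumb, which is an assumption added here and not something the studies above prescribe. The function names and the sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive outcomes per group.

    `records` is an iterable of (group, approved) pairs, where `approved`
    is True when the automated decision was favorable.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical audit sample: 8 of 10 approved in group A, 5 of 10 in group B.
sample = [("A", True)] * 8 + [("A", False)] * 2 \
       + [("B", True)] * 5 + [("B", False)] * 5

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # four-fifths rule of thumb (assumed threshold)
```

A low ratio does not prove discrimination on its own. It is a signal that a human should examine the data and the decision logic, which is why a check like this belongs in ongoing monitoring and not in a one-time review.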

Emotional impacts and workforce anxieties

Deploying autonomous systems alongside human team members affects operations and the people working with these systems. A study published in the journal Technological Forecasting and Social Change found that AI having agency and humanlike capabilities can trigger anxiety about job security.

Employees worried about being replaced by advanced robots might be reluctant to engage fully with AI agents. This fear can lower productivity and increase turnover even if companies have no plans to reduce their workforce. Organizations must provide open communication and opportunities for skill development. Training programs should highlight how AI enhances human work instead of replacing it and frame AI as a collaborator, not a competitor.

It’s important to support employees emotionally through the agentic AI transition to reduce their fear of replacement. AI remains a tool, and continuous learning ensures workers make valuable contributions as their roles evolve alongside new technology.

Zac Amos is a freelance tech writer specializing in AI, cybersecurity and business tech. He is also the Features Editor at ReHack Magazine, and he has bylines on publications like VentureBeat, TechRepublic and Forbes. For more of his work, follow him on LinkedIn.